Four Ways to Deal with Bots in eCommerce Sites

eCommerce sites have been dealing with a rise in bot traffic. In recent years, retailers have become more aware of the potential for data breaches and revenue loss from bots, especially during the holiday season, when more is being sold on their sites. In fact, according to the 2021 Bad Bot Report, bad bot traffic now accounts for a quarter of all internet traffic.

That means roughly 25% of all internet traffic comes from bots trying to scrape prices and content, create and take over accounts, steal credit card information, and more. While it’s impossible to eliminate all bot traffic, the situation can be managed and improved using a combination of machine learning and artificial intelligence.

In this post we’ll take a look at four ways to deal with bots in eCommerce sites. Let’s get started!

1. Finding Them Before They Find You

One of the most important roles for eCommerce retailers is to keep their customers safe, which includes keeping bots from accessing their site. The most effective way to do this is by finding them before they find you, which is where machine learning and artificial intelligence come in.

Bad bots often go undetected by mimicking user behavior, which puts your site security at risk. If you are concerned about your site’s security, it’s crucial to have a comprehensive automated system that can detect bots before they enter your site. This can be done with software that uses machine learning and artificial intelligence to identify bot traffic.

Bot management can come in various forms. These include JavaScript and CAPTCHA challenges, which help determine whether a visitor is a human or a bot. Another form of management is an “allowlist”, a list of the bots that are permitted to access your site.

A reCAPTCHA example from Hulu.com
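
As a rough illustration of the allowlist idea, here is a minimal Python sketch that checks a request’s User-Agent header against a short list of permitted crawlers. The token list and the function name `is_allowlisted_bot` are assumptions made up for this example, not part of any particular product. Because a User-Agent string can be forged, a check like this is only a first filter and would normally be paired with stronger verification.

```python
# Hypothetical allowlist of crawler tokens; adjust it to the bots you trust.
ALLOWED_BOT_TOKENS = ("googlebot", "bingbot")


def is_allowlisted_bot(user_agent: str) -> bool:
    """Return True if the User-Agent contains an allowlisted crawler token."""
    ua = user_agent.lower()
    return any(token in ua for token in ALLOWED_BOT_TOKENS)


if __name__ == "__main__":
    print(is_allowlisted_bot("Mozilla/5.0 (compatible; Googlebot/2.1)"))
    print(is_allowlisted_bot("python-requests/2.31"))
```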

An example of bot management software is Cloudflare’s bot management. According to the company, it controls bot traffic with “speed and accuracy by harnessing the data from the millions of Internet properties” on Cloudflare.

For more information on Cloudflare Bot Management, watch the video here.

2. Cutting Off Their Lifeline

Another important aspect of managing bot traffic is to cut off their lifeline: making sure they don’t degrade the site’s performance. Bots often engage in activities that include web scraping, click fraud and content scraping, all of which can affect your site’s speed and performance.

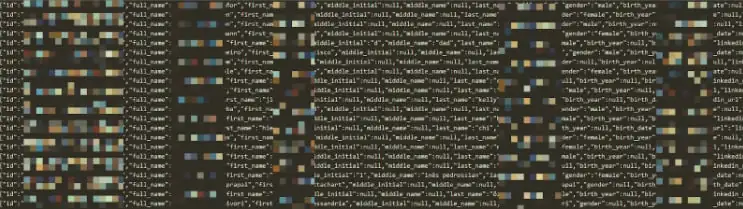

An example of data scraping in action this year comes from LinkedIn, which, in four months’ time, dealt with three major data scraping incidents that gathered data from 600 million users.

Image via cybernews.com – A sample from the scraped data

To avoid problems such as this, you’ll need a task management tool that lets you establish bot activity limits on different site functions, such as crawl speed.
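
One common way to enforce an activity limit is a sliding-window rate limiter. The sketch below is a simplified, in-memory version written for illustration; the class name `SlidingWindowLimiter`, the client identifiers, and the limits are all assumptions, and a production setup would more likely rely on your CDN, web server, or bot management tool.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within `window_seconds`."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client id -> recent request times

    def allow(self, client_id: str, now: float) -> bool:
        hits = self._hits[client_id]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: throttle or challenge this client
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_requests=2, window_seconds=10.0)
    for t in (0.0, 1.0, 2.0, 10.0):
        print(t, limiter.allow("crawler-a", now=t))
```

The timestamp is passed in explicitly so the behavior is easy to test; in a live service you would typically supply `time.monotonic()`.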

In addition to this task management tool, you’ll also need a monitoring system that lets you identify and block bots from accessing your site, along with a blocklist of IP addresses associated with malicious activity. Be aware that some bots will change their “fingerprint” in order to appear more human, but machine learning and artificial intelligence can still help you detect their presence.
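
Checking a visitor against a list of blocked IP addresses can be sketched with Python’s standard `ipaddress` module. The networks below are reserved documentation ranges used purely as placeholders, and `is_blocked` is a hypothetical helper name; real lists usually come from a threat feed or your own monitoring data.

```python
import ipaddress

# Placeholder entries (reserved documentation ranges), not real offenders.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),   # a whole range
    ipaddress.ip_network("198.51.100.7/32"),  # a single address
]


def is_blocked(ip: str) -> bool:
    """Return True if the address falls inside any blocked network."""
    addr = ipaddress.ip_address(ip)  # raises ValueError on malformed input
    return any(addr in network for network in BLOCKED_NETWORKS)


if __name__ == "__main__":
    print(is_blocked("203.0.113.42"))
    print(is_blocked("192.0.2.1"))
```

Since bots rotate addresses, a list like this works best as one signal among several rather than as the only line of defense.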

If you’d like to learn more, UptimeRobot is an uptime monitoring software that offers 50 monitors with free five-minute checks. Learn about their free program and more here.

3. Getting Your Hands Dirty

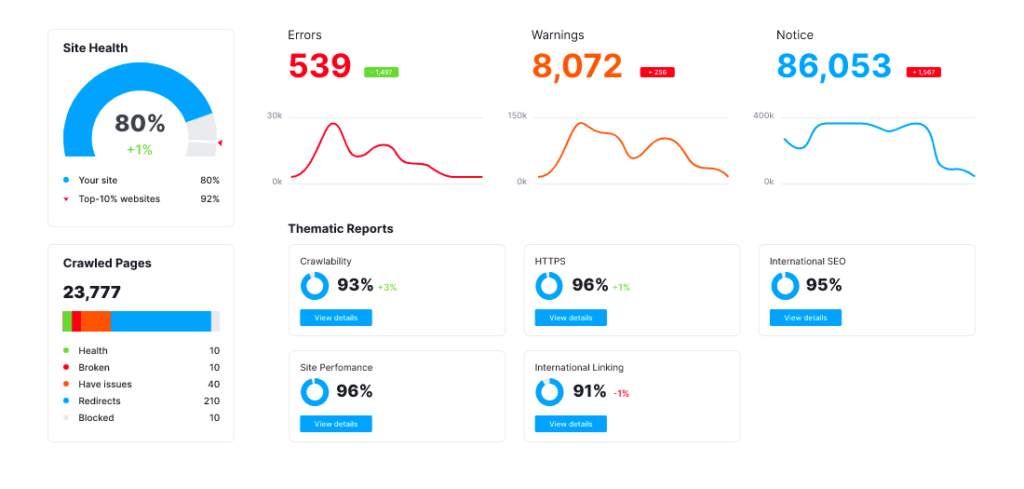

Another important step in fighting bots is to get your hands dirty and start digging through the data related to bot traffic. This includes identifying the number of different crawlers on your site at any given time, what type of content they are looking for, and how much content is being scraped. This also helps you identify other sites that may be using the scraped data, so you can take action accordingly. You can achieve this by using tools that provide audit logs related to crawlers, IP addresses and access frequency.
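
If you have raw access logs, a small script can give a first look at crawlers, IP addresses and access frequency. The sketch below assumes your server writes the widely used “combined” log format; the regular expression, the `summarize` function, and the sample lines are illustrative assumptions, so adapt them to whatever format your logs actually use.

```python
import re
from collections import Counter

# Assumes the "combined" access log format; adjust for your server.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize(lines):
    """Count requests per IP address and per User-Agent string."""
    by_ip, by_agent = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        by_ip[match["ip"]] += 1
        by_agent[match["agent"]] += 1
    return by_ip, by_agent


if __name__ == "__main__":
    # Made-up sample lines for demonstration only.
    sample = [
        '203.0.113.5 - - [10/Oct/2021:13:55:36 +0000] "GET /products/1 HTTP/1.1" 200 512 "-" "ExampleScraper/1.0"',
        '203.0.113.5 - - [10/Oct/2021:13:55:37 +0000] "GET /products/2 HTTP/1.1" 200 498 "-" "ExampleScraper/1.0"',
        '192.0.2.9 - - [10/Oct/2021:13:56:01 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0"',
    ]
    by_ip, by_agent = summarize(sample)
    print(by_ip.most_common(5))
    print(by_agent.most_common(5))
```

Clients with unusually high request counts are candidates for closer inspection, not automatic proof of bad intent.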

To learn how your site performs when crawled, check out the free audit from SEMRush, which can crawl your site and provide a report on its performance.

Audit reporting from SEMRush

4. Leveraging AI to Stay One Step Ahead of the Bots

While there is no way to completely rid your site of bots, you can take proactive steps to minimize their impact on your bottom line. This includes leveraging AI and machine learning in order to stay one step ahead of the bots.

At a glance, this means using tools that provide information related to bot traffic including background information, historical data, site logs and previous bot behavior. This way you can easily block bots from accessing your site before they do any damage.

To learn more about how AI can help you proactively protect your site from bad bots, check out the free eBook from DataVisor.

Trust OuterBox to Help Your eCommerce Website

If there’s one thing we’re learning this year about bad bots, it’s that they’re getting more powerful. But, by using all of these tactics in combination with each other, you should be able to significantly cut down on the number of bots that access your site and reduce their potential for damage. This, in turn, will help you to increase conversions and secure online revenue.

For more information on the types of bad bots to protect your site from, consider reading the Automated Threat Handbook from the Open Web Application Security Project (OWASP).

OuterBox can help your business unlock its potential through results-driven eCommerce website design, development, and online marketing services. Get a free estimate today.